-

Why Your ‘Agile’ Process is Failing: The Case for Pre-Digital Rigidity

The Myth of Iteration

In the modern startup ecosystem, we have fetishized the ‘Minimum Viable Product.’ We are told to ship fast, break things, and iterate our way to greatness. Yet, while we have gained the speed of a digital pulse, we have lost the structural integrity of 1950s engineering. We are sprinting toward growth,…

-

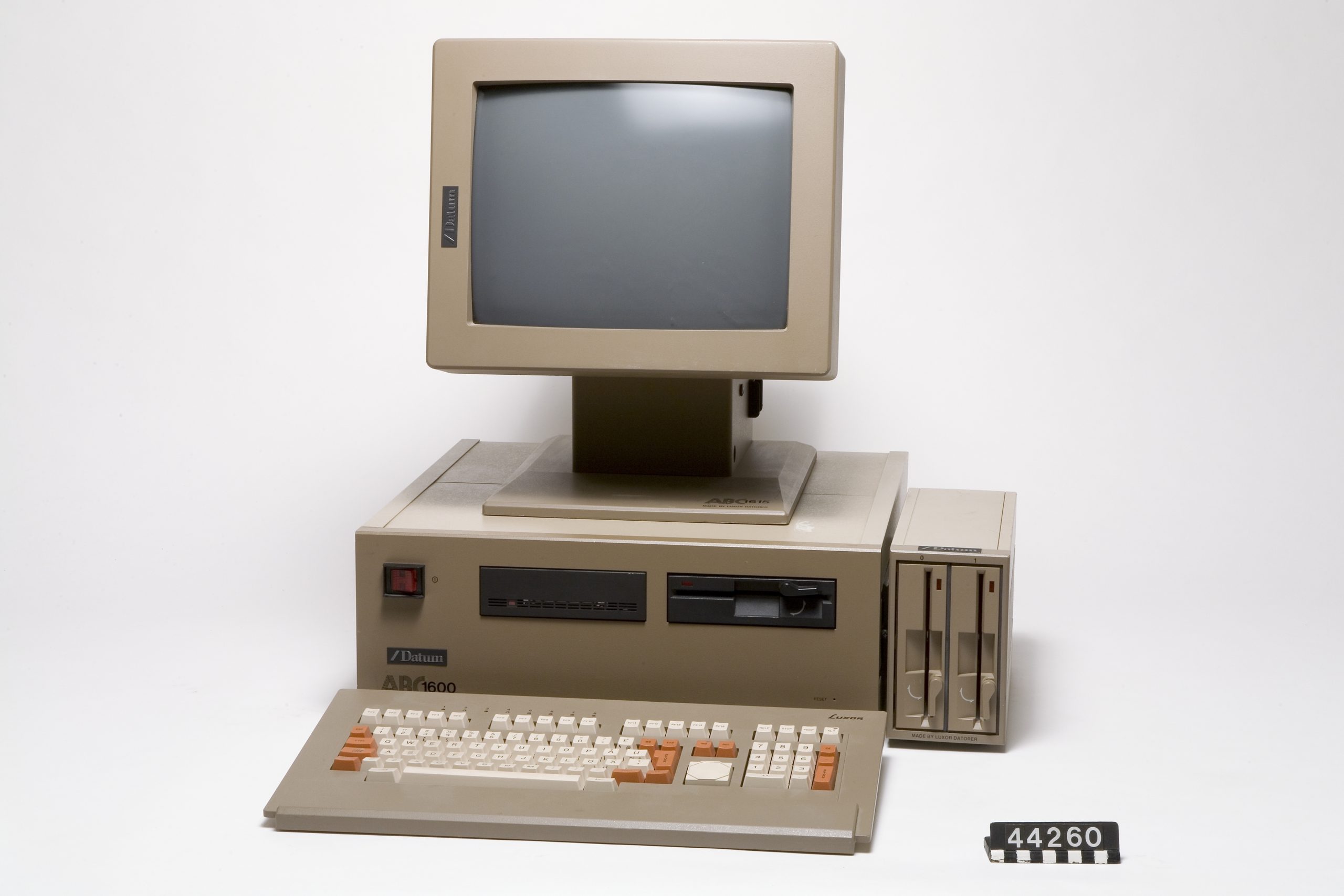

The Danger of Digital Elegance: Why Complexity is Killing Your Operational Velocity

In our previous exploration of 1955-era foundational architecture, we identified a critical truth: longevity is a feature, not a bug. While the original 1955 breakthroughs succeeded through radical modularity and linear logic, modern leadership has pivoted toward a dangerous obsession with ‘digital elegance.’ We build software stacks that look beautiful on a dashboard but are…

-

The Hidden Tax of ‘Ease’: Why SaaS Proliferation is Killing Your Strategic Focus

In the modern enterprise, we have fallen for a seductive trap: the promise that every workflow friction point can be solved by a subscription. When a process becomes cumbersome, the knee-jerk reaction is to procure a specialized tool. We tell ourselves we are ‘optimizing for speed,’ but in reality, we are paying a compounding tax…

-

The Architecture of Obsolescence: Why Your ‘Agile’ Stack is Your Biggest Liability

In the last decade, we have been sold a dangerous lie: that the ultimate competitive advantage is ‘agility.’ Founders are coached to build lean, replace tools weekly, and pivot their internal workflows with the same ease they switch project management software. But while the industry chases flexibility, it has accidentally institutionalized operational impermanence. You aren’t…

-

The Analog Advantage: Why Your Strategy Needs a ‘Manual’ Fail-Safe

In the digital age, we’ve outsourced our institutional memory to the cloud. At The Boss Mind, we often talk about the dangers of complexity, but there is a more insidious risk lurking in our offices: the atrophy of manual competence. When your entire operational backbone relies on an ecosystem of interconnected APIs, automations, and proprietary…

-

The Nomad Executive: Why Remote Work is Not Enough to Save Your Focus

The Myth of the ‘Anywhere’ Office

For years, we’ve been told that liberating ourselves from the traditional office was the ultimate win for cognitive freedom. If the open-plan office is a bug, remote work was framed as the patch. But as we transition into a hybrid-dominant era, a dangerous assumption has taken hold: that physical…

-

The Ghost Project: How Zombie Initiatives Drain Your Best Talent

In our previous exploration of tactical abandonment, we identified the executive’s duty to kill failing ventures. But there is a silent killer lurking in the middle-management ranks that is far more insidious than the failing project: the ‘Ghost Project.’ A Ghost Project isn’t a failure—it’s a lukewarm, mid-performing initiative that is neither profitable enough to…

-

The Cognitive Parasite: Why Your Best Decisions Are Being Hijacked by ‘Narrative Mutagens’

In the framework of Cognitive Biosecurity, we often speak of defending the perimeter—blocking the viral outrage cycles and the dopamine loops that threaten our focus. But there is a more insidious layer to the problem that most leaders overlook: Narrative Mutagenesis. A narrative pathogen doesn’t always come from the outside as an attack; often, it…

-

The Fragility of the ‘Everything App’: Why Unbundling is the Ultimate Defense

We have spent the last decade obsessed with the ‘platform’ model: the idea that to be successful, a business must capture the entire value chain, becoming a one-stop shop for every user need. From Amazon’s retail dominance to the rise of massive software suites, the goal was to achieve ‘everything-ness.’ But as global volatility reveals the brittleness…

-

The Emotional Liability: Why Your ‘Passion’ is Your Biggest Operational Risk

We have been sold a lie by the modern corporate cult of personality: the idea that passion is the engine of high-performance business. We idolize the ‘founder’s fire,’ the ‘visionary zeal,’ and the ‘infectious enthusiasm’ of the leader. We are told that if you aren’t emotionally invested in your project, you aren’t working hard enough.…