The Trust Architecture: Why Multi-Factor Verification is Essential for Reputation Systems

Introduction

In the digital economy, trust is the primary currency. Whether it is a peer-to-peer rental marketplace, a freelance platform, or a decentralized finance protocol, the ability to assess the credibility of a participant is paramount. However, we are currently facing a crisis of authenticity. Traditional reputation systems—often built on simple “star ratings” or binary upvote/downvote counts—have become increasingly easy to manipulate.

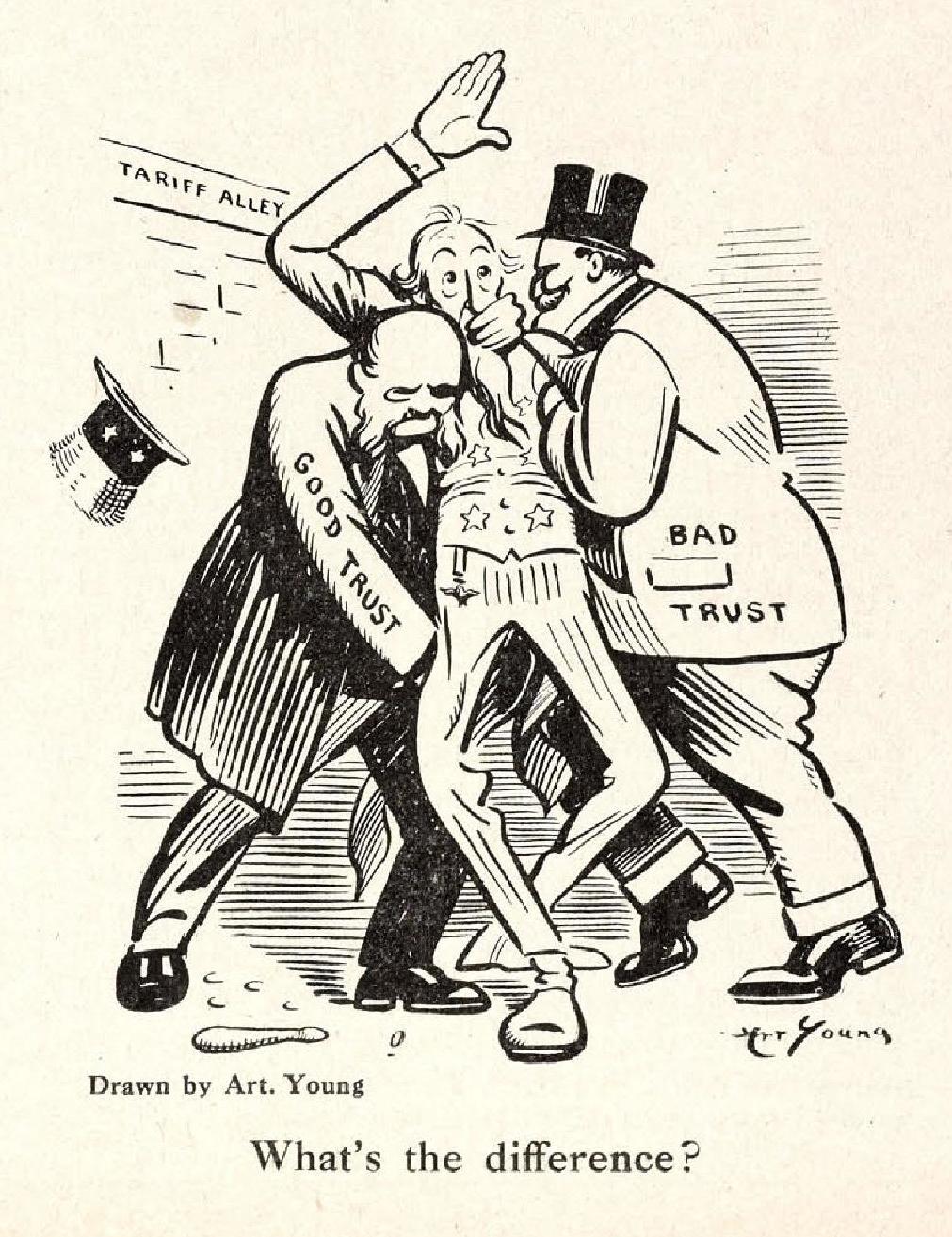

Bad actors have industrialized reputation gaming through bot farms, Sybil attacks, and paid review services. When a score is cheap to fake, it stops carrying information, which leads to the “market for lemons” problem: high-quality participants can no longer distinguish themselves and exit, while low-quality actors dominate. To restore integrity, reputation systems must shift away from single, easily spoofed metrics toward a robust model of multi-factor verification (MFV).

Key Concepts

A reputation system is an algorithm designed to aggregate past interactions to predict future behavior. Historically, these systems were “permissionless” by default—anyone could create an account and immediately start contributing to the aggregate score. This is precisely where the vulnerability lies.

Multi-Factor Verification (MFV) in the context of reputation means that a user’s “reputation score” is not derived from a single data point (like a review), but from a weighted composite of distinct, verifiable signals. These factors typically fall into three categories:

- Identity Signals: Verification of the participant behind the account (e.g., government ID, social graph connectivity, or biometric uniqueness).

- Behavioral Signals: Evidence of actual, high-cost actions (e.g., a completed transaction, a duration of membership, or a staked financial deposit).

- Network Signals: The reputation of the participants who have interacted with the user. In a robust system, an endorsement from a high-reputation node carries more weight than an endorsement from a new, unverified user.

By requiring multiple, independent proofs of legitimacy, the cost of manipulating the system rises sharply, because every fake account must defeat every factor rather than just one. An attacker would need not only to create a fake account but also to simulate a verifiable identity and a history of expensive, genuine interactions.
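As a rough illustration of the weighted composite described above, the following sketch combines the three signal categories into one score. The `Signals` type, the 0-to-1 normalization, and the weights are all assumptions made for this example; a real platform would choose and tune its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signals:
    """Hypothetical, pre-normalized inputs, each in the range 0..1."""
    identity: float    # e.g. strength of identity verification
    behavioral: float  # e.g. share of activity backed by completed transactions
    network: float     # e.g. reputation-weighted endorsements received


# Illustrative weights only (an assumption); they should sum to 1.
WEIGHTS = {"identity": 0.3, "behavioral": 0.4, "network": 0.3}


def composite_score(signals: Signals, weights: dict = WEIGHTS) -> float:
    """Return the weighted sum of the three signal categories."""
    values = {
        "identity": signals.identity,
        "behavioral": signals.behavioral,
        "network": signals.network,
    }
    for name, value in values.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return sum(weights[name] * values[name] for name in weights)


# An account with many transactions but no verified identity and no
# endorsements is capped by the behavioral weight alone.
unverified = composite_score(Signals(identity=0.0, behavioral=1.0, network=0.0))
verified = composite_score(Signals(identity=1.0, behavioral=1.0, network=1.0))
```

Because no single factor can carry the score on its own, faking one signal yields only a fraction of the maximum reputation.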

Step-by-Step Guide

Building a reputation system resistant to manipulation requires a systematic approach to data collection and weighting. Follow these steps to architect a more resilient framework:

- Establish the Identity Anchor: Require a baseline level of verification. This could be a phone number, a verified email, or a more sophisticated Zero-Knowledge Proof (ZKP) that confirms the user is a unique human without exposing sensitive personal data.

- Weight Actions by Economic or Temporal Cost: Not all interactions are equal. A review left by someone who spent money on the platform should be weighted significantly higher than a review from a guest user. Implement a “Skin in the Game” mechanism where users must stake tokens or complete a transaction to unlock reputation-granting capabilities.

- Implement Cross-Platform Correlation: If possible, allow users to link existing, long-standing accounts from established platforms (like LinkedIn or GitHub). This acts as a “proof of history,” making it harder for a brand-new bot to masquerade as an expert.

- Deploy Decay and Velocity Functions: Reputation should not be static. Implement a decay function where older ratings become less relevant over time. Additionally, monitor the “velocity” of activity; if a user goes from zero to one hundred reviews in an hour, the system should automatically flag the account for manual review or restrict its reputation-granting influence.

- Incorporate Peer-Weighted Scoring: Use a PageRank-style algorithm where the reputation of the rater influences the weight of the rating. This limits “siloed” manipulation, where a group of fake accounts boosts each other’s scores, because endorsements from accounts that nobody trusted has endorsed carry little or no weight.
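The peer-weighted scoring step can be sketched as a small power iteration over an endorsement graph. This is a minimal sketch, not a production algorithm: it assumes a hand-picked set of pre-trusted `seeds` that receive all of the "teleport" weight (a seeded variant of PageRank), and the function name, graph format, and parameter values are hypothetical.

```python
def peer_weighted_scores(endorsements, seeds, damping=0.85, iterations=100):
    """Seeded PageRank-style scores.

    endorsements: dict mapping a rater to the list of users they endorse.
    seeds: non-empty collection of pre-trusted users (an assumption of this sketch).
    """
    seeds = set(seeds)
    if not seeds:
        raise ValueError("at least one seed is required")
    nodes = set(endorsements) | seeds
    for targets in endorsements.values():
        nodes.update(targets)

    # All baseline trust starts at, and teleports back to, the seeds.
    base = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    score = dict(base)

    for _ in range(iterations):
        nxt = {n: (1.0 - damping) * base[n] for n in nodes}
        for rater in nodes:
            targets = endorsements.get(rater, [])
            if targets:
                # A rater splits their own score across those they endorse.
                share = damping * score[rater] / len(targets)
                for target in targets:
                    nxt[target] += share
            else:
                # Users who endorse nobody return their weight to the seeds.
                for seed in seeds:
                    nxt[seed] += damping * score[rater] / len(seeds)
        score = nxt
    return score


graph = {
    "alice": ["bob"],
    "bob": ["carol"],
    "sybil1": ["sybil2"],  # a closed ring of fake accounts
    "sybil2": ["sybil1"],
}
scores = peer_weighted_scores(graph, seeds=["alice"])
```

In this toy graph the two ring accounts endorse each other but receive nothing from the trusted region, so their scores stay at zero no matter how many endorsements they exchange. The trade-off is that the choice of seeds becomes a trust decision in its own right.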

Examples or Case Studies

The most successful implementations of MFV-based reputation are found in industries where the cost of failure is high. For instance, top-tier freelance platforms have moved beyond simple stars. They now require verified tax documentation, a portfolio history, and a track record of completed, paid invoices. A freelancer with a five-star rating but zero completed projects will not show up in top search results, effectively neutralizing the “empty account” attack vector.
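One way a search ranking could neutralize the “empty account” case is to shrink each rating toward a neutral prior until enough completed projects back it up. The sketch below uses a simple Bayesian average; it is not any specific platform's formula, and `prior_rating` and `prior_weight` are illustrative assumptions.

```python
def ranking_score(avg_rating, completed_projects, prior_rating=3.0, prior_weight=5):
    """Blend the observed average rating with a neutral prior.

    prior_weight acts like a number of "virtual" projects rated at
    prior_rating, so accounts with little verified history stay near the prior.
    """
    if completed_projects < 0:
        raise ValueError("completed_projects must be non-negative")
    weighted_sum = prior_weight * prior_rating + completed_projects * avg_rating
    return weighted_sum / (prior_weight + completed_projects)


empty_five_star = ranking_score(avg_rating=5.0, completed_projects=0)
established = ranking_score(avg_rating=4.6, completed_projects=40)
```

Under these assumed parameters, the five-star account with no completed projects scores exactly the prior of 3.0, while the 4.6-star account with forty paid projects scores about 4.42 and ranks above it.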

Another example is found in blockchain-based governance. Systems like “Proof of Humanity” require users to submit a video of themselves, post a deposit, and have the submission vouched for by an existing member. This creates a multi-factor loop: you need an identity, you need a social endorsement, and you need to stake capital. If a submission is successfully challenged as fake or duplicate, the submitter forfeits the deposit, and the member who vouched for it risks losing their own place in the registry. This creates a powerful economic and social incentive to keep the reputation system clean.

“Trust is not a static score; it is a dynamic relationship that must be continuously verified against the reality of behavior and the weight of social proof.”

Common Mistakes

- The “One-Size-Fits-All” Metric: Relying on a single, public-facing score is dangerous. It incentivizes users to optimize for that number rather than the quality of their work. Use a composite score instead, and keep its components and weights private so they are harder to game.

- Ignoring Metadata: Failing to analyze the “how” of a rating is a critical error. If you don’t track IP addresses, device fingerprints, or the time-of-day of interactions, you are leaving the door wide open for automated scripts.

- Prioritizing Frictionless Onboarding: While ease of use is important, total lack of friction is the enemy of security. If it takes three seconds to create a reputation-generating account, it will be exploited. Find the “Goldilocks zone”—enough friction to deter bad actors, but not so much that you drive away legitimate users.

- Static Scoring: A reputation score that never changes is a liability. Reputation should be a living, breathing metric that reflects current performance.
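To make the metadata point concrete, here is a minimal sketch of two checks that rely only on device fingerprints and timestamps. The event format, function names, and thresholds are assumptions for illustration; real fingerprinting and rate limits need platform-specific tuning, and a flag should lead to review rather than an automatic ban.

```python
from collections import defaultdict


def shared_fingerprints(events, max_accounts=3):
    """Return fingerprints used by more than max_accounts distinct accounts.

    events: iterable of (account_id, device_fingerprint, timestamp_seconds).
    """
    accounts_by_device = defaultdict(set)
    for account, fingerprint, _timestamp in events:
        accounts_by_device[fingerprint].add(account)
    return {
        fingerprint: accounts
        for fingerprint, accounts in accounts_by_device.items()
        if len(accounts) > max_accounts
    }


def exceeds_velocity(timestamps, max_events=20, window_seconds=3600):
    """Return True if any sliding window holds more than max_events events."""
    ordered = sorted(timestamps)
    start = 0
    for end in range(len(ordered)):
        # Shrink the window until it spans less than window_seconds.
        while ordered[end] - ordered[start] >= window_seconds:
            start += 1
        if end - start + 1 > max_events:
            return True
    return False


events = [(f"acct{i}", "fp-shared", 100 * i) for i in range(4)]
events.append(("acct9", "fp-unique", 50))
suspicious = shared_fingerprints(events)

burst = exceeds_velocity(range(0, 3600, 36))   # 100 reviews within one hour
normal = exceeds_velocity([0, 4000, 8000])     # three reviews, hours apart
```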

Advanced Tips

To take your reputation system to the next level, consider implementing reputation decay and negative feedback loops. Just as a credit score drops after missed payments, a reputation system should allow for “negative weighting,” where events such as upheld complaints or disputes subtract from the score rather than merely failing to add to it.
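Decay and negative weighting can be combined in a single function. The sketch below assumes exponential decay with a configurable half-life, which is one common choice among several, and a hypothetical event format of `(timestamp_days, value)` in which negative values represent penalties.

```python
def decayed_reputation(events, now, half_life_days=180.0):
    """Sum signed event values, halving each one's weight every half_life_days.

    events: iterable of (timestamp_days, value); value may be negative.
    now: current time in the same day-based units.
    """
    total = 0.0
    for timestamp, value in events:
        age = max(0.0, now - timestamp)  # treat future-dated events as brand new
        total += value * 0.5 ** (age / half_life_days)
    return total


# Four points earned 180 days ago count for two today; a fresh
# one-point penalty counts in full.
history = [(0, 4.0), (180, -1.0)]
current = decayed_reputation(history, now=180)
```

With a 180-day half-life, the older positive events in this example have faded to half their weight, so a single recent penalty moves the score noticeably. Shorter half-lives make the score more responsive but also easier to reset after bad behavior.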

Furthermore, explore the use of Zero-Knowledge Proofs (ZKP). This allows users to prove they have a high reputation on another platform without revealing their actual identity or transaction history. This preserves privacy while maintaining the security benefits of multi-factor verification.

Finally, consider Community Moderation. Even the best algorithms have edge cases. Empowering your most trusted, long-standing users to flag anomalies—and rewarding them for doing so—creates a human-in-the-loop layer that no bot can easily penetrate.

Conclusion

The transition from simple, vulnerable reputation systems to multi-factor, resilient architectures is not just a technical upgrade—it is a necessity for the survival of online communities. By anchoring reputation in identity, behavior, and social proof, we can create environments where honest participants are recognized and bad actors are effectively silenced.

The goal is to make the cost of manipulation prohibitively high. When attackers must spend significant time, money, and social capital just to gain a marginal, temporary advantage, most will move on to easier targets. By following these principles, you give your platform a far better chance of remaining a place of trust, authenticity, and long-term value.
